Archive for the ‘ACM_ICN’ tag

The Metaverse as an Information-Centric Network

This is an introduction to our paper:

- Dirk Kutscher, Jeff Burke, Giuseppe Fioccola, Paulo Mendes; Statement: The Metaverse as an Information-Centric Network; 10th ACM Conference on Information-Centric Networking (ACM ICN '23); October 9-10, 2023, Reykjavik, Iceland; https://dl.acm.org/doi/10.1145/3623565.3623761; pre-print available at http://arxiv.org/abs/2309.09147

The Web Today

The Web today has a specific technical definition: it includes presentation layer technologies, protocols, agreed-upon ways of achieving certain semantics such as Representational State Transfer (REST), and security infrastructure. However, from a user perspective, it can be viewed as a universe of consistently navigable content and (occasionally) interoperable services. The user experience and architectural underpinnings have evolved in parallel and have influenced each other: for many end users, the Web and the network are synonymous. Rather than building up "Metaverse" as an application domain based on IP, we aim to explore "the Metaverse" as strongly intertwined with ICN, just as the modern concept of the Web and its technology stack are inseparable for a broad set of applications.

As a placeholder name for a range of new technologies and experiences, "the Metaverse" is even less well-defined than the Web. We adopt the commonly used concept of a shared, interoperable, and persistent XR. Some descriptions and early prototypes for social AR/VR systems suggest leveraging existing Internet and Web protocols to provide Metaverse services, without addressing the technical complexity and centralization of control required to provide the underlying cloud service infrastructure.

Metaverse as an Information-Centric Concept

Here, we do not take as given current designs and deployment models that consider the Metaverse as an overlay application with corresponding infrastructure dependencies, as this exacerbates the current gaps (and the resulting costs and technical complexity) between distributed applications and the underlying network architecture. Instead, we assume a fundamentally information-centric system in which most applications participate in granular 3D content exchange, context-aware integration with the physical world, and other Metaverse-relevant services.

"The Metaverse" is an information-centric concept that likely will become synonymous with the network itself. We argue that reciprocal design of the network and applications will open new opportunities for the deployment of Metaverse-suggestive experiences even today.

Experientially, this Metaverse is an extension of the Web into immersive XR modalities that are often aligned with physical space, as in augmented reality (AR). We conceive the Metaverse not only as a shared XR environment, but as the next generation of the Web, extending into 3D interaction/immersion and optionally overlaid on physical spaces. Instead of rendering data objects into a 2D page (within a tab within a window) on a device, we envision such objects being rendered into a shared 3D space, interacting among each other and with end users.

Architecturally, leveraging ICN concepts provides support for decentralized publishing, content interoperability, and co-existence, based on general building blocks and not within separated application silos as in today's initial prototypes. We claim that such properties are required to achieve the generally circulated visions of Metaverse systems, but are not achievable today because of the host- and connection-centric way in which the Web operates and is presented to users in browsers.

ICN Capabilities

We point out four ICN capabilities critical to Metaverse concepts:

- scalable and robust multi-destination communication, overcoming IP multicast challenges, such as inter-domain routing, scalability, and routing communication overhead;

- leveraging wireless broadcast to support shared local views and low-latency interactivity without application-awareness in edge routers;

- privacy, selective attention, content filtering, and autonomous interactions, as well as ownership and control on the publishing side; and

- supporting in-network processing for object replication and transformation.
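The first capability can be made concrete with a toy model. In CCNx/NDN-style forwarders, a Pending Interest Table (PIT) remembers which faces asked for a name, so that one returning Data packet is fanned out to all of them. The sketch below is a minimal illustration under that assumption; `ToyForwarder`, its methods, and the example name are hypothetical and not taken from the paper or from any ICN library.

```python
from collections import defaultdict


class ToyForwarder:
    """Hypothetical single-node forwarder with a Pending Interest Table (PIT)."""

    def __init__(self):
        self.pit = defaultdict(set)   # name -> downstream faces waiting for it
        self.upstream_requests = []   # names actually forwarded upstream

    def on_interest(self, name, in_face):
        """Record the requester; forward upstream only for the first request."""
        first = name not in self.pit
        self.pit[name].add(in_face)
        if first:
            self.upstream_requests.append(name)
        return first

    def on_data(self, name, payload):
        """Deliver one Data packet to every waiting face and clear the entry."""
        faces = self.pit.pop(name, set())
        return {face: payload for face in sorted(faces)}


# Three participants ask for the same (made-up) object name.
fwd = ToyForwarder()
name = "/room42/avatar/dirk/frame/17"
for face in ("alice", "bob", "carol"):
    fwd.on_interest(name, face)

delivered = fwd.on_data(name, b"mesh-bytes")
print(len(fwd.upstream_requests), sorted(delivered))
```

Only one request leaves the node, yet all three participants receive the object. The forwarder needs no knowledge of the application for this to work.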

Interactive Holographic Communication

For example, imagine interactive holographic communication consisting of participants' 3D video, spatial audio, and shared 3D documents. In ICN, such an application can represent virtual content as secure data objects and share them efficiently in a larger group of peers, fetching only the data necessary to reconstruct a suitable representation while being aware of the constraints of user devices and access networks.

Furthermore, while experiencing 3D objects shared by the group, each participant may also interact in the same XR environment with personal services such as wayfinding, messaging, and Internet of Things (IoT) device status. Interactions between private and shared 3D objects would be simplified if these objects use similar conventions but with different security. This concept is semantically well-aligned with ICN properties, particularly for security, as it revolves around object-level data exchange rather than hosts or channels. Integration and interoperability within a shared XR environment, without centralization, is challenging if one has to negotiate not only data interactions but also the underlying service connections and security relationships using host-centric paradigms. The host-centric approach also exacerbates the impact of intermittent connectivity on interactivity when the global network is required for functions, such as rendezvous, that are handled locally in ICN.

Creating Shared Environments

As a second example, consider creating a shared environment (e.g., to pre-visualize engineering models of an aircraft) from a collection of collaboratively edited 3D documents. Imagine component documents interacting in a simulation. Documents can be modularized, linked, and overlaid in a web-like manner. Today, such cross-platform interoperability and visualization without centralized hubs is impractical, and it is difficult to create the secure, granular data flows required for interaction between co-existing 3D elements to "bring them to life" in a virtual world. In an ICN approach, such modules could be independently authored and published, shared between applications, becoming building blocks of a richer, interacting system of user- and machine-generated content.

We introduce some technical challenges and research directions in our paper (link below).

Further Reading

The Metaverse as an Information-Centric Network

- Dirk Kutscher, Jeff Burke, Giuseppe Fioccola, Paulo Mendes; Statement: The Metaverse as an Information-Centric Network; 10th ACM Conference on Information-Centric Networking (ACM ICN '23); October 9-10, 2023, Reykjavik, Iceland; https://dl.acm.org/doi/10.1145/3623565.3623761; pre-print available at http://arxiv.org/abs/2309.09147

- Giuseppe Fioccola, Paulo Mendes, Jeff Burke, Dirk Kutscher; Information-Centric Metaverse; Internet Draft draft-fmbk-icnrg-metaverse-01; Work in Progress; July 2023

- Jeff Burke, Lixia Zhang, Dirk Kutscher; Named Data Microverse project

- Dirk Kutscher, Jeff Burke, Paulo Mendes, Michelle Munson, Todd Hodes; Named Data Metaverse Panel at NDNComm-2023

- Dirk Kutscher, Lixia Zhang, Jeff Burke, Dave Oran; IEEE MetaCom Workshop on Decentralized, Data-Oriented Networking for the Metaverse (DORM); IEEE Metacom-2023

- Dirk Kutscher, Dave Oran; Statement: RESTful Information-Centric Networking; ACM Conference on Information-Centric Networking (ICN 2022); Osaka, Japan; September 2022; https://dirk-kutscher.info/publications/icn-rest/

Distributed Computing in Information-Centric Networking

This is an introduction to our paper:

- Wei Geng, Yulong Zhang, Dirk Kutscher, Abhishek Kumar, Sasu Tarkoma, Pan Hui; SoK: Distributed Computing in ICN; 10th ACM Conference on Information-Centric Networking (ACM ICN '23); October 9-10, 2023, Reykjavik, Iceland; https://doi.org/10.1145/3623565.3623712; pre-print available at https://arxiv.org/abs/2309.08973.

Distributed computing is the basis for all relevant applications on the Internet. Based on well-established principles, different mechanisms, implementations, and applications have been developed that form the foundation of the modern Web.

The Internet with its stateless forwarding service and end-to-end communication model promotes certain types of communication for distributed computing. For example, IP addresses and/or DNS names provide different means for identifying computing components. Reliable transport protocols (e.g., TCP, QUIC) promote interconnecting modules. Communication patterns such as REST and protocol implementations such as HTTP enable certain types of distributed computing interactions, and security frameworks such as TLS and the web PKI constrain the use of public-key cryptography for different security functions.

From Distributed Computing...

Distributed computing has different facets, for example, client-server computing, web services, stream processing, distributed consensus systems, and Turing-complete distributed computing platforms. There are also different perspectives on how distributed computing should be implemented on servers and network platforms, a research area that we refer to as Computing in the Network. Active Networking, one of the earliest works on computing in the network, intended to inject programmability and customization of data packets in the network itself; however, security and complexity considerations proved to be major limiting factors, preventing its wider deployment.

Dataplane programmability refers to the ability to program behavior, including application logic, on network elements and SmartNICs, thus enabling some form of in-network computing. Alternatively, different types of server platforms and light-weight execution environments are enabling other forms of distributing computation in networked systems, for example through architectural patterns such as edge computing.

... To Computing in the Network

With currently available Internet technologies, we can observe a relatively succinct layering of networking and distributed computing, i.e., distributed computing is typically implemented in overlays, with Content Distribution Networks (CDNs) being a prominent and ubiquitous example. Recently, there has been growing interest in revisiting this relationship, for example by the IRTF Computing in the Network Research Group (COINRG), motivated by advances in network and server platforms, e.g., through the development of programmable data plane platforms and of different types of distributed computing frameworks, e.g., stream processing and microservice frameworks.

This is also motivated by the recent development of new distributed computing applications such as distributed machine learning (ML), and emerging new applications such as Metaverse suggest new levels of scale in terms of data volume for distributed computing and the pervasiveness of distributed computing tasks in such systems. There are two research questions that stem from these developments:

- How can we build distributed computing systems in the network that can leverage the on-path location of compute functions, e.g., optimally aligning stream processing topologies with networked computing platform topologies?

- How can the network support distributed computing in general, so that the design and operation of such systems can be simplified, but also so that different optimizations can be achieved to improve performance and robustness?

Issues in Legacy Distributed Computing

Although there are many distributed computing applications, it is also worth noting that there are many limitations and performance issues. Factors such as network latency, data skew, checkpoint overhead, back pressure, garbage collection overhead, and issues related to performance, memory management, and serialization and deserialization overhead can all influence the efficiency. Various optimization techniques can be implemented to alleviate these issues, including memory adjustment, refining the checkpointing process, and adopting efficient data structures and algorithms.

Some performance problems and complexity issues stem from the overlay nature of current systems and their way of achieving the above-mentioned mechanisms with temporary solutions based on TCP/IP and associated protocols such as DNS. For example, Network Service Mesh has been characterized as architecturally complex because of the so-called sidecar approaches and their implementation problems.

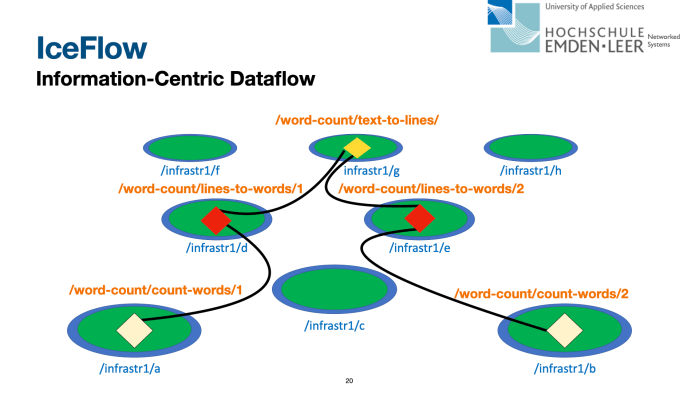

In systems that are layered on top of HTTP or TCP (or QUIC), compute nodes typically cannot assess the network performance directly – only indirectly through observed throughput and buffer under-runs. Information-centric data-flow systems, such as IceFlow, intend to provide better visibility and thus better joint optimization potential by more direct access to data-oriented communication resources. Then, some coordination tasks that are based on exchanging updates of shared application state can be elegantly mapped to named data publication in a hierarchical namespace, as the different dataset synchronization (Sync) protocols in NDN demonstrated.
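To illustrate how shared-state updates can map to named data in a hierarchical namespace, here is a small sketch loosely modeled on the state-vector idea used by some NDN Sync protocols. It is not the wire protocol of any of them: the `/<prefix>/<producer>/<seq>` naming convention, the function names, and the example values are assumptions made for illustration.

```python
def merge(local, remote):
    """Element-wise maximum of two state vectors (producer -> latest sequence number)."""
    return {p: max(local.get(p, 0), remote.get(p, 0))
            for p in local.keys() | remote.keys()}


def missing_names(prefix, local, remote):
    """Names of data objects the local node has not seen yet."""
    names = []
    for producer in sorted(remote):
        start = local.get(producer, 0) + 1
        for seq in range(start, remote[producer] + 1):
            names.append(f"{prefix}/{producer}/{seq}")
    return names


# The local node learns a peer's state vector and derives what to fetch.
local = {"alice": 3, "bob": 1}
remote = {"alice": 3, "bob": 4, "carol": 1}

to_fetch = missing_names("/chat", local, remote)
local = merge(local, remote)
print(to_fetch)
print(local)
```

The coordination step reduces to comparing small vectors. The actual updates are then retrieved as ordinary named data objects, from the producer or from any cache that holds them.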

Information-Centric Distributed Computing

In our paper on Distributed Computing in ICN at ACM ICN-2023, we focus on distributed computing and on how information-centricity in the network and application layer can support the development and operation of such systems. The rich set of distributed computing systems in ICN suggests that ICN provides some benefits for distributed computing that could offer advantages such as better performance, security, and productivity when building corresponding applications.

ICN with its data-oriented operation and generally more powerful forwarding layer provides an attractive platform for distributed computing. Several different distributed computing protocols and systems have been proposed for ICN, with different feature sets and different technical approaches, including Remote Method Invocation (RMI) as an interaction model as well as more comprehensive distributed computing platforms. RMI systems such as RICE leverage the fundamental name-based forwarding service in ICN systems and map requests to Interest messages and method names to content names (although the actual implementation is more intricate). Method parameters and results are also represented as content objects, which provides an elegant platform for such interactions.
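The basic mapping can be sketched in a few lines of Python. This is deliberately much simpler than RICE, which uses Reflexive Forwarding for parameter transfer. The naming scheme (`<prefix>/<method>/params/<digest>`), the `ToyProducer` class, and the in-memory `store` standing in for the network are all hypothetical.

```python
import hashlib
import json


def params_object(params):
    """Serialize parameters as a content object named by a digest of its bytes."""
    body = json.dumps(params, sort_keys=True).encode()
    digest = hashlib.sha256(body).hexdigest()[:16]
    return f"/params/{digest}", body


def invocation_name(service_prefix, method, params_name):
    """Interest name for a call: service prefix + method + reference to the params."""
    return f"{service_prefix}/{method}{params_name}"


class ToyProducer:
    """Hypothetical producer that dispatches on the method name component."""

    def __init__(self, prefix, store):
        self.prefix = prefix
        self.store = store                          # name -> bytes
        self.methods = {"add": lambda a, b: a + b}

    def on_interest(self, name):
        rest = name[len(self.prefix) + 1:]          # strip "<prefix>/"
        method, _, params_ref = rest.partition("/")
        args = json.loads(self.store["/" + params_ref])
        result = self.methods[method](**args)
        return name, json.dumps(result).encode()    # the result is a content object too


store = {}
p_name, p_body = params_object({"a": 2, "b": 3})
store[p_name] = p_body

call = invocation_name("/calc", "add", p_name)
data_name, data = ToyProducer("/calc", store).on_interest(call)
print(data_name, data)
```

Because the result is returned under the same name as the request, a repeated invocation with identical parameters could in principle be answered by whoever holds that object.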

ICN generally attempts to provide a more useful service to data-oriented applications but can also be leveraged to support distributed computing specifically.

Names

Accessing named data in the network as a native service can remove the need for mapping application logic identifiers such as function names to network and process identifiers (IP addresses, port numbers), thus simplifying implementation and run-time operation, as demonstrated by systems such as Named Function Networking (NFN), RICE, and IceFlow. It is worth noting that, although ICN does not generally require an explicit mapping of names to other domain identifiers, such networks require suitable forwarding state, e.g., obtained from configuration, dynamic learning, or routing.
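The forwarding state mentioned above is typically consulted by longest-prefix match on name components. A minimal sketch follows, assuming a dictionary-based table; `ToyFib`, the face labels, and the example prefixes are made up for illustration.

```python
def components(name):
    """Split '/a/b/c' into ('a', 'b', 'c')."""
    return tuple(c for c in name.split("/") if c)


class ToyFib:
    """Hypothetical forwarding table keyed by name prefixes."""

    def __init__(self):
        self.routes = {}   # prefix (tuple of components) -> next-hop face

    def add_route(self, prefix, face):
        self.routes[components(prefix)] = face

    def lookup(self, name):
        """Return the face of the longest matching prefix, or None."""
        comps = components(name)
        for length in range(len(comps), -1, -1):
            face = self.routes.get(comps[:length])
            if face is not None:
                return face
        return None


fib = ToyFib()
fib.add_route("/edge", "face-1")
fib.add_route("/edge/compute", "face-2")

print(fib.lookup("/edge/compute/resize/img42"))
print(fib.lookup("/edge/storage/blob7"))
print(fib.lookup("/elsewhere/x"))
```

A function name such as `/edge/compute/resize` is forwarded directly on its own components. No lookup step turns it into an address and port first.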

Data-orientedness

ICN's notion of immutable data with strong name-content binding through cryptographic signatures and hashes seems to be conducive to many distributed computing scenarios, as both static data objects and dynamic computation data in those systems, such as input parameters and result values, can be directly sent as ICN data objects. NFN first demonstrated this.
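The hash-based variant of name-content binding is easy to sketch with the standard library. The `sha256=` name component used here is an illustrative convention rather than the encoding of a specific ICN protocol, and signature-based binding is left out because it would need a cryptography library.

```python
import hashlib


def publish(prefix, content):
    """Return (name, content); the last name component carries the SHA-256 digest."""
    digest = hashlib.sha256(content).hexdigest()
    return f"{prefix}/sha256={digest}", content


def verify(name, content):
    """Check that the content matches the digest carried in its name."""
    expected = name.rsplit("sha256=", 1)[-1]
    return hashlib.sha256(content).hexdigest() == expected


name, data = publish("/job7/result", b"42")
print(verify(name, data))    # content matches its name
print(verify(name, b"43"))   # altered content is detected
```

Since the check depends only on the name and the bytes, a consumer can apply it to a computation result no matter which node delivered it.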

Securing distributed computing could be supported better in so far as ICN does not require additional dependencies on public-key or pipe securing infrastructure, as keys and certificates are simply named data objects and centralized trust anchors are not necessarily needed. Larger data collections can be aggregated and re-purposed by manifests (FLIC), enabling "small" and "big data" computing in one single framework that is congruent to the packet-level communication in a network. IceFlow uses such an aggregation approach to share identical stream processing results objects in multiple consumer contexts.
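The manifest idea can be approximated as a list of hash pointers to chunks. The sketch below shows the concept only and does not follow the FLIC encoding; the function names, chunk size, and in-memory `store` are assumptions.

```python
import hashlib


def chunk_name(chunk):
    """Name a chunk by the SHA-256 digest of its bytes."""
    return "sha256=" + hashlib.sha256(chunk).hexdigest()


def build_manifest(data, chunk_size):
    """Split data into hash-named chunks; return (ordered pointers, chunk store)."""
    store, pointers = {}, []
    for i in range(0, len(data), chunk_size):
        chunk = data[i:i + chunk_size]
        name = chunk_name(chunk)
        store[name] = chunk
        pointers.append(name)
    return pointers, store


def reassemble(pointers, store):
    """Fetch each chunk by name, verify it, and concatenate in manifest order."""
    out = bytearray()
    for name in pointers:
        chunk = store[name]
        if chunk_name(chunk) != name:
            raise ValueError(f"chunk does not match its name: {name}")
        out += chunk
    return bytes(out)


data = b"abcdefghij" * 3
pointers, store = build_manifest(data, chunk_size=8)

# A second, smaller collection re-uses the same chunks without copying them.
head = pointers[:2]
print(len(pointers), reassemble(pointers, store) == data, reassemble(head, store))
```

The second manifest illustrates the re-purposing aspect: a different collection is just another list of pointers to objects that already exist.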

Data-orientedness eliminates the need for connections; even reliable communication in ICN is completely data-oriented. If higher-layer (distributed computing) transactions can be mapped to network-layer data retrieval, then server complexity can be reduced (no need to maintain several connections), and consumers get direct visibility into network performance. This can enable performance optimizations, such as linking network and computing flow control loops (one realization of joint optimization), as shown by IceFlow.

Location independence and data sharing

Embracing the principle of accessing named and authenticated data also enables location independence, i.e., corresponding data can be obtained from any place in the network, such as replication points (repos) and caches. This fundamentally enables better multi-source/path capabilities as well as data sharing, i.e., multiple data retrieval operations for one named data object by different consumers can potentially be completed by a cache, repo, or peer in the network.
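A toy cache makes the data-sharing effect visible. `CachingNode` and the `origin` dictionary that stands in for the producer are hypothetical; a real content store would also handle freshness and eviction, which are omitted here.

```python
class CachingNode:
    """Hypothetical in-network node with a content store in front of an origin."""

    def __init__(self, origin):
        self.origin = origin          # name -> bytes, stands in for the producer
        self.content_store = {}       # local cache of named data objects
        self.origin_fetches = 0

    def fetch(self, name):
        """Serve from the content store if possible, else retrieve and cache."""
        if name in self.content_store:
            return self.content_store[name]
        self.origin_fetches += 1
        data = self.origin[name]
        self.content_store[name] = data
        return data


origin = {"/model/v3/weights/0": b"\x00\x01\x02"}
node = CachingNode(origin)

# Three different consumers retrieve the same named object via this node.
results = [node.fetch("/model/v3/weights/0") for _ in range(3)]
print(node.origin_fetches, results[0] == results[2])
```

Consumers ask for the name and never need to know whether the bytes came from the producer or from the cache.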

Stateful Forwarding

ICN provides stateful, symmetric forwarding, which enables general performance optimizations such as in-network retransmissions, more control over multipath forwarding, and load balancing. This concept could be extended to support distributed computing specifically, for example, if load balancing is performed based on RTT observations for idempotent remote-method invocations.
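RTT-based load balancing can be sketched as a forwarding strategy that keeps a smoothed RTT per next hop. The `RttBalancer` class, the smoothing factor, and the worker names are assumptions for illustration and do not correspond to the strategy of a particular forwarder.

```python
class RttBalancer:
    """Hypothetical strategy: prefer the next hop with the lowest smoothed RTT."""

    def __init__(self, faces, alpha=0.25):
        self.alpha = alpha                          # weight of the newest sample
        self.srtt = {face: None for face in faces}  # smoothed RTT per face (ms)

    def choose(self):
        """Probe unmeasured faces first, then pick the lowest smoothed RTT."""
        for face, rtt in self.srtt.items():
            if rtt is None:
                return face
        return min(self.srtt, key=self.srtt.get)

    def record(self, face, rtt_ms):
        """Update the exponentially weighted moving average for a face."""
        old = self.srtt[face]
        if old is None:
            self.srtt[face] = rtt_ms
        else:
            self.srtt[face] = (1 - self.alpha) * old + self.alpha * rtt_ms


lb = RttBalancer(["worker-a", "worker-b"])
lb.record("worker-a", 40.0)
lb.record("worker-b", 10.0)
first_pick = lb.choose()        # worker-b answers faster

lb.record("worker-b", 200.0)    # worker-b becomes slow (e.g., overloaded)
second_pick = lb.choose()
print(first_pick, second_pick)
```

The restriction to idempotent invocations matters because the forwarder may retry or redirect a request to another worker, which is only safe if executing it twice has no additional effect.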

More Networking, less Management

The combination of data-oriented, connection-less operation and stateful (more powerful) forwarding in ICN shifts functionality from management and orchestration layers (back) to the network layer. This can reduce complexity, an effect that can be especially pronounced in distributed computing. For example, legacy stream processing and service mesh platforms typically must manage connectivity between deployment units (pods in Kubernetes). In Apache Flink, a central orchestrator manages the connections between task managers (node agents). Systems such as IceFlow have demonstrated a more self-organized and decentralized stream-processing approach, and the presented principles are applicable to other forms of distributed computing.

In summary, we can observe that ICN's general approach of having the network provide a more natural (data retrieval) platform for applications benefits distributed computing in similar ways as it benefits other applications. One particularly promising approach is the elimination of layer barriers, which enables certain optimizations.

In addition to NFN, there are other approaches that jointly optimize the utilization of network and computing resources to provide network service mesh-like platforms, such as edge intelligence using federated learning, advanced CDNs where nodes can dynamically adapt to user demands according to content popularity, such as iCDN and OpenCDN, and general computing systems, such as Compute-First Networking, IceFlow, and ICedge.

Our paper on Distributed Computing in ICN at ACM ICN-2023 provides a comprehensive analysis and understanding of distributed computing systems in ICN, based on a survey of more than 50 papers. Naturally, these different efforts cannot be directly compared due to their difference in nature. We categorized different ICN distributed computing systems and individual approaches, and highlighted their specific properties.

The scope of this study is technologies for ICN-enabled distributed computing. Specifically, we divide the different approaches into four categories, as shown in the figure above: enablers, protocols, orchestration, and applications. The contributions of this study are as follows:

- A discussion of the benefits and challenges of distributed computing in ICN.

- A categorization of different proposed distributed computing systems in ICN.

- A discussion of lessons learned from these systems.

- A discussion of existing challenges and promising directions for future work.

Recent Research on Distributed Computing in ICN

I am providing some pointers to my previous research on distributed computing in ICN below.

The paper that has led to this article:

- Wei Geng, Yulong Zhang, Dirk Kutscher, Abhishek Kumar, Sasu Tarkoma, Pan Hui; SoK: Distributed Computing in ICN; 10th ACM Conference on Information-Centric Networking (ACM ICN '23); October 9-10, 2023, Reykjavik, Iceland; https://doi.org/10.1145/3623565.3623712; pre-print available at https://arxiv.org/abs/2309.08973.

Current work in the Computing in the Network Research Group of the IRTF:

- Dirk Kutscher, Teemu Kärkkäinen, Jörg Ott; Directions for Computing in the Network; Internet Draft draft-irtf-coinrg-dir-00, Work in Progress; August 2023

Reflexive Forwarding and Remote Method Invocation

Providing a unified remote computation capability in ICN presents some unique challenges, among which are timer management, client authorization, and binding to state held by servers, while maintaining the advantages of ICN protocol designs like CCN and NDN. In the RICE work, we developed a unified approach to remote function invocation in ICN that exploits the attractive ICN properties of name-based routing, receiver-driven flow and congestion control, flow balance, and object-oriented security while presenting a natural programming model to the application developer. The RICE protocol leverages an ICN extension called Reflexive Forwarding that provides ICN-idiomatic method parameter transmission.

- RICE: Remote Method Invocation in ICN (best paper award at ACM ICN-2018)

- Reflexive Forwarding in ICN

Distributed Computing Frameworks

Leveraging RICE as a mechanism, we have developed Compute-First Networking (CFN) in ICN, a Turing-complete distributed computing platform. IceFlow is a proposal for Dataflow in ICN in a decentralized manner.

- Compute-First Networking (CFN): Distributed Computing Meets ICN

- IceFlow: Information-Centric Dataflow: Re-Imagining Reactive Distributed Computing

Applications

Based on Reflexive Forwarding, we have developed a concept for RESTful ICN that leverages CCNx key exchange for setting up security contexts and keys that could then be used for secure, data-oriented REST-like communication.

Delay-Tolerant LoRa leveraged Reflexive Forwarding to enable constrained LoRa nodes to "phone home" when they want to transmit data, thus enabling new ways (without central network and application servers) for connecting LoRa networks to the Internet.

ACM ICN-2022 Highlights

The ACM Information-Centric Networking 2022 Conference took place in Osaka from September 19 to 21, 2022, hosted by Osaka University. It was a three-day conference with tutorials, one keynote, two panel sessions, and paper and poster/demo presentations. The highlights (with links to papers and presentations) from my perspective were the following:

Keynote by Dave Oran: Travels with ICN – The road traversed and the road ahead

Dave Oran presented an overview of his research experience over the last ten years that was informed by many seminal research contributions on ICN and his career in the network vendor sector as well as in standards and research bodies such as the IETF and IRTF.

The keynote's theme was disentangling the application and network layer aspects of ICN, which led to interesting perspectives on some of the previous design decisions in CCNx and NDN.

As illustrated in the figure below, the more networking-minded ICN topics are typically connected to features and challenges of building packet-forwarding networks based on the principle of accessing named data. The actual research questions are generally not different from those of IP networks (routing, mobility etc.), but ICN provides a significant potential to re-think and often improve over the specific approaches in IP networks due to its core properties such as object security and symmetric, stateful forwarding.

Information-centric applications development in contrast is often concerned with general naming concepts, namespace design, and security features that are enabled by namespace design and application layer object security such as trust schema and provenance.

The message in Dave's talk was not that these are completely disjunct areas that should best be investigated independent of each other, but rather that the ICN's fascination and disruptive potential is based on the potential for rethinking layer boundaries and contemplating a better function split between applications, network stacks on endpoints, and forwarding elements in the network. In his talk, Dave focused on

- the interaction of consumers & networking producers of data;

- routing;

- forwarding; and

- congestion control.

He discussed many lessons learned as well as open research and new ideas for all of these topics – please refer to the presentation slides for details.

One particularly interesting current ICN research topic is distributed computing and ICN architectures & interaction models for that. ICN's name-based forwarding model and object security provide very interesting options for simplifying systems such as microservices, RESTful services and distributed application coordination. Alluding to our work on Reflexive Forwarding, Dave offered two main lessons learned from building corresponding communication abstractions:

- Content fetch with two-way handshakes is a poor match for doing distributed computations.

- Extensions to the base protocols can give a flexible underpinning for multiple interaction models.

This raises the question of the slim waist of ICN, i.e., as research progresses, what should be the minimal feature set and what is the right extensibility model?

Dave concluded his talk with a few interesting questions:

- How can the networking insights we’ve gained from ICN protocols inform the construction of Information Centric systems and applications?

  - Whether and how to utilize name-based routing to achieve robustness and performance scaling for distributed applications?

  - Where does caching help or not help and how to best utilize caches?

  - Does pushing Names down to lower layers help latency? Resilience? Fairness?

- How can the insights we’ve gained from applying Information Centricity in applications inform what we bother to change the network to do, and what not?

  - Do things like multipath forwarding, in-network retransmission, or reflexive forwarding actually enable applications that are hard or infeasible to do without them?

  - Is there a big win for wireless networks in terms of optimizing a scarce resource or having more robust and responsive mobility characteristics?

More details in the presentation slides

Panel: ICN and the Metaverse – Challenges and Opportunities

I had the pleasure of being on a panel with Jeff Burke (UCLA) and Geoff Huston (APNIC), moderated by Alexander Afanasyev (Florida International University), discussing Metaverse challenges and opportunities for ICN.

Questions on Metaverse and ICN

Large-scale interactive and networked AR/VR/XR systems are now referred to as Metaverse, and the general assumption is that corresponding applications will be hosted on platforms, similar to those that are employed for web and social media applications today.

In the web, the platform approach has led to an accelerated development and growth of a few popular mainstream systems. On the other hand, several problems have been observed such as ubiquitous surveillance, lock-in effects, centralization, innovation stagnation, and cost overhead for achieving the required performance.

While these phenomena may have both technical and economic root causes, we would like to discuss:

- How should Metaverse systems be designed, and what would be important architectural pillars?

- What is the potential for re-imagining Metaverse with information-centric concepts and protocols?

- Would ICN enable or lead to profound architecturally unique approaches, or would protocols such as NDN be a drop-in replacement for QUIC, HTTP/3 etc.?

- What are the challenges for building ICN-based Metaverse systems, and what is missing in today's ICN platforms?

As input to the discussion, Jeff Burke and I (together with Dave Oran) submitted two papers:

- Jeff Burke: Statement: As TCP/IP is to the web, ICN is to the…?

- Dirk Kutscher and David Oran: RESTful information-centric networking: statement

Research Directions

Jeff offered a list of really interesting research directions based on the notion that in the Metaverse, host-based identifiers and end-to-end connections between hosts would be abstracted even further away than in today’s web. Client devices would fade into the background in favor of the data supplanting or augmenting the real world. Thus, a metaverse consisted of information not associated with the physical world unless it needed to describe or provide interaction with it. The experiential semantics were viscerally information-centric, which would help to motivate the ICN research opportunities such as:

- Persistence: The information forming a metaverse persists across sessions and users.

- “Content” and Interoperability: Designing the relationships among metaverse-layer objects and the named packets that an ICN network moves and stores.

- Naming and Spatial Organization: How to best integrate knowledge from research in databases and related fields where these challenges have been considered for decades.

- Trust, Provenance, and Transactions: Using ICN to disentangle metaverse objects from the security provided by a source or a given channel of communication, with the named data representation secured at the time of publication instead.

RESTful ICN

In our paper on RESTful ICN, Dave Oran and I asked the question: given that most web applications are concerned with transferring named units of data (web resources, video chunks etc.), can the REST paradigm be married with the data-oriented, receiver-driven operation of Information-Centric Networking (ICN), leveraging attractive ICN benefits such as consumer anonymity, stateful and symmetric forwarding, flow balance, in-network caching, and implicit object security?

We argue that this is feasible given some of the recent advances in ICN protocol development and that the resulting suite is simpler and potentially has better performance and robustness properties. Our sketch of an ICN-based protocol framework addresses secure and efficient establishment and continuation of REST communication sessions, without giving up key ICN properties, such as consumer anonymity and flow balance.

Panel Discussion

The panel discussed the current socio-economic realities in the Internet and the Web and explored opportunities (and non-opportunities) for redesigns, and how ICN could be a potential enabler for that.

My personal view is that most of the potential dystopian outcomes of future Metaverse applications are independent of the enabling networking technology and the technology stack at large (security, naming etc.). It is really important to understand the actual objectives of a specific system, i.e., who operates it to which ends, similar to so-called social networks today. If the main objective is to create a more powerful advertising and manipulation platform, then such a system will exhibit as yet unimaginable surveillance and tracking mechanisms – independent of the underlying network stack.

With respect to the technical design, I agree with Jeff Burke's proposed research directions. One particularly interesting question will be how to design an Information-Centric communication stack and corresponding APIs. I argued that it is not necessary to replicate existing interaction styles and protocol stacks from the TCP/IP (or QUIC) world. Instead, it should be more interesting and productive to discuss the fundamentally needed interaction classes such as

- High-performance multi-destination transfer

- Group communication and synchronization

- High-performance session-oriented communication with servers and peers (for which we proposed RESTful ICN).

The panel then also discussed how likely non-mainstream Metaverse systems would be adopted and whether the current socio-economic environment actually allows for that level of permissionless innovation – considering the network effects that Metaverse systems would be subjected to, much in the same way as so-called social networks.

Panel: Hard Lessons for ICN from IP Multicast?

Thomas Schmidt (HAW Hamburg) moderated a panel discussion with Jon Crowcroft (University of Cambridge), Dave Oran, and George Xylomenos (Athens University of Economics and Business) as panelists.

With the continued shift towards more and more live video streaming services over the Internet, scalable multi-destination delivery has become more relevant again. For example, the recently chartered IETF Working Group on Media over QUIC (MOQ) is addressing the need for scalable multi-destination delivery and the unavailability of IP multicast as a platform by developing a QUIC-based overlay system that essentially uses information-centric concepts, albeit in a QUIC overlay network. Such a system would consist of a network of QUIC proxies, connected via individual QUIC connections to emulate request forwarding and chunk-based video data distribution. Considering the non-negligible overhead and complexity, one might ask whether live video streaming over the Internet could be served by a better approach. Questions like this are being asked by the network service provider community as well (ISPs have to bear a lot of the overhead and overlay complexity), for example in this APNIC blog posting by Jake Holland titled Why inter-domain multicast now makes sense.

This panel was inspired by a statement paper submitted by Jon Crowcroft titled Hard lessons for ICN from IP multicast (https://dl.acm.org/doi/10.1145/3517212.3558086). In this brief statement, Jon traced the line of thought from Internet multicast through to Information Centric Networking, and used this to outline what he thinks should have been the priorities in ICN work from the start.

The statement paper discusses a few problems with IP multicast that have been largely acknowledged, such as difficulties in creating viable business models, unsolved security problems such as IP multicast being used as a DDOS platform, and inter-domain multicast that has proven difficult to establish due to multicast routing scaling problems and the lack of robust pricing models. The second part of the paper then discusses some ICN work that has been addressing some of the mentioned issues.

The paper gave rise to an interesting and controversial discussion at the panel. The most important point is IMO to characterize the ICN communication model correctly: it is correct that the combination of stateful forwarding, Interest aggregation, and caching enables an implicit multi-destination delivery service. It is implicit because consumers that ask for the same units of named data within a time frame on the order of the network RTT will send equivalent Interest messages, so that forwarders can multicast the data delivery to the faces they received such Interests from. In conjunction with opportunistic (or managed) caching by forwarders, this would enable a very elegant multi-destination delivery service that can even cater to a wider variation of Interest sending times, as "late" Interests would be answered from caches.
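To make the implicit multi-destination delivery concrete, here is a minimal Python sketch of a forwarder that aggregates equivalent Interests and answers late ones from its cache. The `Forwarder` class, its method names, and the example name `/video/seg1` are illustrative assumptions, not the API of any real NDN or CCNx forwarder, and the sketch ignores Interest lifetimes, nonces, and cache eviction.

```python
from collections import defaultdict


class Forwarder:
    """Toy ICN forwarder: pending Interest table (PIT) plus content store."""

    def __init__(self):
        self.pit = defaultdict(set)    # name -> faces waiting for that Data
        self.content_store = {}        # name -> cached Data payload
        self.upstream_interests = []   # names actually forwarded upstream

    def on_interest(self, name, face):
        """Return cached Data if available, else record the face in the PIT."""
        if name in self.content_store:
            return self.content_store[name]  # "late" Interest served from cache
        first_request = name not in self.pit
        self.pit[name].add(face)
        if first_request:
            # Only the first Interest for a name is forwarded upstream;
            # later equivalent Interests are aggregated in the PIT.
            self.upstream_interests.append(name)
        return None

    def on_data(self, name, payload):
        """Cache the Data and return the faces it must be sent to."""
        self.content_store[name] = payload
        return sorted(self.pit.pop(name, set()))


fwd = Forwarder()
fwd.on_interest("/video/seg1", face=1)
fwd.on_interest("/video/seg1", face=2)            # aggregated, not forwarded again
faces = fwd.on_data("/video/seg1", b"chunk")      # delivered to both faces
late = fwd.on_interest("/video/seg1", face=3)     # answered from the cache
```

In this toy run a single upstream Interest serves three consumers: two through PIT aggregation and one through the content store, without any group management state.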

This is a different service model compared to the push-based IP multicast model. ICN does not provide such a service in the first place, but is just applying its regular receiver-driven mode of operation, which elegantly works well in the case of multiple consumers asking for the same data. It is probably fair to say that the ICN model caters to media-delivery use cases (one stream delivered to multiple consumers) but does not try to provide the more general IP multicast service model (Any Source Multicast). However, by extension, the ICN approach could be applied to multi-source scenarios as well – the system would build implicit delivery trees from any source to current consumers, without requiring extra machinery.

With this, if you like, simpler service model, ICN fundamentally does not inherit many of the problems that prohibit IP multicast in the Internet: the system is receiver-driven, which simply eliminates DDOS threats (on the packet level). It is also not clear whether ICN would need anything special to provide this service in inter-domain settings (except for general ICN routing in the Internet, which is a general, but different research question).

Acknowledging this conceptual and practical difference, there are obviously other interesting research questions that ICN multi-destination delivery entails, for example performance and jitter reduction in the presence of caching and other transport questions.

Overall, a good time to talk about multi-destination delivery and to keep thinking about missing pieces and potential future work in ICN.

Enabling Distributed Applications

One paper presentation session was focused on distributed applications – a very interesting and relevant ICN research area. It featured three great papers:

SoK: The evolution of distributed dataset synchronization solutions in NDN

This paper by Philipp Moll, Varun Patil, Lan Wang, and Lixia Zhang systemizes the knowledge about distributed dataset synchronisation in ICN, or Sync in short, which, according to the authors, plays the role of a transport service in the Named Data Networking (NDN) architecture. A number of NDN Sync protocols have been developed over the last decade. For this paper, they conducted a systematic examination of NDN Sync protocol designs, identified common design patterns, revealed insights behind different design approaches, and collected lessons learned over the years.

Sync enables new ways of thinking about coordination and general communication in distributed ICN systems, and I encourage everyone to read this for a good overview of the different proposed systems and their properties.

There are also some open research questions around Sync, such as large-scale applicability, alternatives to using Interest multicast for discovery, and more – a good topic to work on!

DICer: distributed coordination for in-network computations

This paper by Uthra Ambalavanan, Dennis Grewe, Naresh Nayak, Liming Liu, Nitinder Mohan, and Jörg Ott is a nice product of the Piccolo project that I had the pleasure to set up and co-lead.

Application domains such as automotive and the Internet of Things may benefit from in-network computing to reduce the distance data travels through the network and the response time. Information Centric Networking (ICN) based compute frameworks such as Named Function Networking (NFN) are promising options due to their location independence and loosely-coupled communication model.

However, unlike current operations, such solutions may benefit from orchestration across the compute nodes to use the available resources in the network better. In this paper, the authors adopted the State Vector Synchronization (SVS), an application dataset synchronization protocol in ICN, to enhance the neighborhood knowledge of in-network compute nodes in a distributed fashion. They designed distributed coordination for in-network computation (DICer) that assists the service deployments by improving the resolution of compute requests.
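As a rough illustration of the state-vector idea, the following Python sketch merges a received state vector into a local one by keeping the highest sequence number per node. It only shows this general merge step; the function name, the node names, and the way missing sequence ranges are reported are assumptions made for illustration and are not taken from the DICer paper or from an SVS implementation.

```python
def merge_state_vectors(local, received):
    """Merge two state vectors (node name -> latest sequence number).

    Returns the merged vector and, per node, the inclusive range of
    sequence numbers that the local node has not seen yet.
    """
    merged = dict(local)
    missing = {}
    for node, seq in received.items():
        known = merged.get(node, 0)
        if seq > known:
            missing[node] = (known + 1, seq)  # data still to be fetched by name
            merged[node] = seq
    return merged, missing


# Hypothetical compute nodes exchanging their view of each other's state.
local = {"/node/a": 5, "/node/b": 2}
received = {"/node/b": 4, "/node/c": 1}
merged, missing = merge_state_vectors(local, received)
```

Because the merge only ever takes the maximum per node, vectors can arrive in any order or more than once without changing the result, which is what makes this style of synchronization attractive for loosely coupled neighbors.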

Kua: a distributed object store over named data networking

This paper by Varun Patil, Hemil Desai, and Lixia Zhang describes a distributed object store in NDN.

Applications such as machine learning training systems or log collection generate and consume large amounts of data. Object storage systems provide a simple abstraction to store and access such large datasets. These datasets are typically larger than the capacities of individual storage servers, and require fault tolerance through replication. This paper presents Kua, a distributed object storage system built over Named Data Networking (NDN).

The data-centric nature of NDN helps Kua maintain a simple design while catering to requirements of storing large objects, providing fault tolerance, low latency and strong consistency guarantees, along with data-centric security.

ICN Applications and Wireless Networking

The session on ICN Applications and Wireless Networking features four papers:

N-DISE: NDN-based data distribution for large-scale data-intensive science

This paper by Yuanhao Wu, Faruk Volkan Mutlu, et al. describes an NDN for Data-Intensive Science Experiments (N-DISE).

To meet unprecedented challenges faced by the world’s largest data- and network-intensive science programs, the authors designed and implemented a new, highly efficient and field-tested data distribution, caching, access and analysis system for the Large Hadron Collider (LHC) high energy physics (HEP) network and other major science programs. They developed a hierarchical Named Data Networking (NDN) naming scheme for HEP data, implemented new consumer and producer applications to interface with the high-performance NDN-DPDK forwarder, and built on recently developed high-throughput NDN caching and forwarding methods.

The experiments in this paper include delivering LHC data over the wide area network (WAN) testbed at throughputs exceeding 31 Gbps between Caltech and StarLight, with dramatically reduced download time.

Building a secure mHealth data sharing infrastructure over NDN

In this paper, Saurab Dulal, Nasir Ali, et al. describe an NDN-based mHealth system called mGuard.

Exploratory efforts in mobile health (mHealth) data collection and sharing have achieved promising results. However, fine-grained contextual access control and real-time data sharing are two of the remaining challenges in enabling temporally-precise mHealth intervention. The authors have developed an NDN based system called mGuard to address these challenges. mGuard provides a pub-sub API to let users subscribe to real-time mHealth data streams, and uses name-based access control policies and key-policy attribute-based encryption to grant fine-grained data access to authorized users based on contextual information.
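The name-based part of such access control can be pictured with a small Python sketch that checks a requested data name against the name prefixes a user is allowed to read. This is only a plain policy lookup under assumed names; the policy format, the user identifier, and the `/mhealth/...` names are hypothetical, and the sketch does not attempt to model mGuard's attribute-based encryption.

```python
def is_prefix(prefix, name):
    """True if `prefix` matches `name` on whole name components."""
    p = prefix.strip("/").split("/")
    n = name.strip("/").split("/")
    return n[:len(p)] == p


def may_access(policy, user, data_name):
    """Check a user's allowed name prefixes against a requested data name."""
    return any(is_prefix(prefix, data_name) for prefix in policy.get(user, []))


# Hypothetical policy: one researcher may read one patient's heart-rate stream.
policy = {"researcher-a": ["/mhealth/patient1/heart-rate"]}
allowed = may_access(policy, "researcher-a", "/mhealth/patient1/heart-rate/2023-10-09")
denied = may_access(policy, "researcher-a", "/mhealth/patient1/heart-rate-raw/1")
```

Matching on whole name components rather than on raw strings matters here: `heart-rate-raw` shares a string prefix with `heart-rate` but is a different component, so the second request is rejected.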

Delay-tolerant ICN and its application to LoRa

I have co-authored this paper together with Peter Kietzmann, José Alamos, Thomas C. Schmidt, and Matthias Wählisch.

Connecting low-power long-range wireless networks, such as LoRa, to the Internet imposes significant challenges because of the vastly longer round-trip-times (RTTs) in these constrained networks. In our paper on "Delay-Tolerant ICN and Its Application to LoRa" we present an Information-Centric Networking (ICN) protocol framework that enables robust and efficient delay-tolerant communication to edge networks, including but not limited to LoRa. Our approach provides ICN-idiomatic communication between networks with vastly different RTTs for different use cases. We applied this framework to LoRa, enabling end-to-end consumer-to-LoRa-producer interaction over an ICN-Internet and asynchronous ("push") data production in the LoRa edge. Instead of using LoRaWAN, we implemented an IEEE 802.15.4e DSME MAC layer on top of the LoRa PHY layer and ICN protocol mechanisms in the RIOT operating system.

For our experiments, we connected constrained LoRa nodes and gateways on IoT hardware platforms to a regular, emulated ICN network and performed a series of measurements that demonstrate robustness and efficiency improvements compared to standard ICN.

iCast: dynamic information-centric cross-layer multicast for wireless edge network

This paper by Tianlong Li, Tian Song, Yating Yang, and Jike Yang presents iCast, short for dynamic information-centric multicast, to enable dynamic multicast in the link layer.

Native multicast support in Named Data Networking (NDN) is an attractive feature, as multicast content delivery can reduce the redundant traffic and improve the network performance, especially in wireless edge networks. With their visibility into Interest and Data names, NDN routers automatically aggregate the same requests from different end hosts and establish network-layer multicast. However, the current link-layer multicast based on host-centric MAC address management is inflexible. Consequently, supporting NDN dynamic multicast with the current link-layer architecture remains a challenge.

iCast enables dynamic multicast in the link layer based on three main contributions:

- iCast integrates NDN native multicast with the host-centric link layer while maintaining the host-centric properties of the current link layer.

- iCast achieves per-packet dynamic multicast in the link layer, and the authors further propose a hash-based iCast variant for dynamic connection.

- iCast has been implemented in a real testbed, and the evaluation results show that iCast reduces traffic by up to 59.53% compared with vanilla NDN. iCast bridges the gap between NDN multicast and the host-centric link-layer multicast.

Unlocking REST with Information-Centric Networking

Web applications today utilize the Representational State Transfer (REST) architecture pattern, depending on HTTP, TLS, and either TCP or QUIC as the protocol substrate to build upon. The resulting protocol stacks can be quite complex, and the RESTful communication is locked into channel-like connections of the respective transport protocol.

Given that most web applications are concerned with transferring named units of data (web resources, video chunks etc.), we asked ourselves: can the REST paradigm be married with the data-oriented, receiver-driven operation of Information-Centric Networking (ICN), leveraging attractive ICN benefits such as consumer anonymity, stateful and symmetric forwarding, flow balance, in-network caching, and implicit object security?

We argue that this is feasible given some of the recent advances in ICN protocol development and that the resulting suite is simpler and potentially has better performance and robustness properties. Our sketch of an ICN-based protocol framework addresses secure and efficient establishment and continuation of REST communication sessions, without giving up key ICN properties, such as consumer anonymity and flow balance.

Representational State Transfer in the Web Today

The Web today is based on an extended version of the Representational State Transfer (REST) architecture pattern for client-server interaction. This simple model has been extended and applied to HTTP for web applications by supporting not only retrieval, but also creation, processing, and deletion of data. Real-world REST systems employ additional concepts and mechanisms such as security and privacy, support for application sessions, and have various optimizations to eliminate unnecessary round-trips.

REST and ICN

Since nearly all web applications today are based on the RESTful client-server communication model, the question then occurs how such interactions can be achieved in ICN, i.e., secure and confidential RESTful access to web resources, with support for efficient handling of a sequence of interactions in a session-like context.

The applicability of ICN's Interest/Data interaction to modern web applications that provide a significant amount of data in request headers for cookies and other request parameters has been assessed by Moiseenko et al., concluding that it is not immediately clear how to use ICN effectively for web communication. We have also argued in our earlier RICE paper on Remote Method Invocation in ICN that the basic Interest/Data exchange model of CCNx/NDN-style ICN is not sufficient and that certain use cases (e.g., sending resource representations or request parameters from a client to a server) should not be implemented by overloading the Interest message.

In draft-oran-icnrg-reflexive-forwarding, we have discussed the specific problems extensively. In its default mode, ICN also lacks name privacy, which we consider essential for any real-world application of ICN to web services. However, various techniques have been developed to improve name privacy in ICN, such as the onion routing approach in ANDaNA (Anonymous Named Data Networking Application).

In our vision paper on RESTful Information-Centric Networking at ACM ICN-2022 (https://conferences2.sigcomm.org/acm-icn/2022/), we argue that an ICN-based RESTful programming model that overcomes these limitations is feasible given some of the recent advances in ICN protocol development, and we provide the outline of the corresponding protocol framework.

HTTP has been extended and partially redesigned over time, and provides its own idiosyncratic conventions and mechanisms, e.g., which request-relevant information to represent in the URI vs. message headers vs. message bodies. The goal of this work is not to simply map current HTTP mechanisms to ICN, but rather to provide an ICN-idiomatic platform for RESTful applications including an Information-Centric web.

Any ICN web platform will only be useful and relevant if it provides security and privacy properties equivalent to (or better than) the state of the art, i.e., HTTP/3 over QUIC and TLS 1.3, so our proposed framework provides a TLS-like security context for RESTful communication (sessions). Also, RESTful ICN should not compromise on existing ICN benefits such as consumer anonymity and consumer mobility.

Our technical design integrates CCNx Key Exchange (a TLS-1.3-like key exchange protocol for ICN) and our Reflexive Forwarding scheme for ICN, and uses that for providing symmetric key derivation and efficient RESTful communication and session resumption in an ICN-idiomatic way. Please check out our paper for details.

References

- Dirk Kutscher and David Oran. 2022; RESTful information-centric networking: statement; In Proceedings of the 9th ACM Conference on Information-Centric Networking (ICN '22); Association for Computing Machinery, New York, NY, USA, 150–152. https://doi.org/10.1145/3517212.3558089

- ACM ICN-2022

- David Oran and Dirk Kutscher; Reflexive Forwarding for CCNx and NDN Protocols; Internet Draft draft-oran-icnrg-reflexive-forwarding, Work in Progress

- Marc Mosko, Ersin Uzun, Christopher A. Wood; CCNx Key Exchange Protocol Version 1.0; Internet Draft draft-wood-icnrg-ccnxkeyexchange-02, Work in Progress; January 2018

Information-Centric Dataflow: Re-Imagining Reactive Distributed Computing

The Dataflow paradigm is a popular distributed computing abstraction that is leveraged by several popular data processing frameworks such as Apache Flink and Google Dataflow. Fundamentally, Dataflow is based on the concept of asynchronous messaging between computing nodes, where data controls program execution, i.e., computations are triggered by incoming data and associated conditions. This typically leads to very modular system architectures that enable re-use, re-composition, and parallel execution naturally. Most of the popular distributed processing frameworks today are implemented as overlays, i.e., they allow for instantiating computations and for inter-connecting them, for example by creating and maintaining communication channels between nodes such as system processes and microservices.

Connections and Overlays

The connection-based approach incurs several architectural problems and inefficiencies, for example: application logic is concerned with receiving and producing data as a result of computation processes but connections imply transport endpoint addresses that are typically not congruent. This typically implies a mapping or orchestration system. One key goal for Dataflow systems is to enable parallel execution, i.e., one computation is run in parallel, which also affects the communication relationships with upstream producers and downstream consumers. For example, when parallelizing a computation step, it typically implies that each instance is consuming a partition of the inputs instead of all the inputs. An indirection- and connection-based approach makes it harder to configure (and especially to dynamically re-configure) such dataflow graphs.

In some variants of Dataflow, for example stream processing, it can be attractive if one computation output can be consumed by multiple downstream functions. Connection-based overlays typically require duplicating the data for each such connection, incurring significant overheads. In large-scale scenarios, the computation functions may be distributed to multiple hosts that are inter-connected in a network. Orchestrators may have visibility into compute resource availability but typically have to treat the TCP/IP network as a blackbox. As a result, the actual data flow is locked into a set of overlay connections that do not necessarily follow optimal paths, i.e., the communication flows are incongruent with the logical data flows.

IceFlow: Information-Centric Dataflow

In our ACM ICN 2021 paper Vision: Information-Centric Dataflow – Re-Imagining Reactive Distributed Computing, we present IceFlow – an Information-Centric Dataflow system approach that supports traditional Dataflow with Information-Centric principles and that can be used as a drop-in replacement for existing Dataflow-based frameworks.

In addition to the paper, we also show a live demo of a joint optimization of computing and networking resources in IceFlow: Decentralized ICN-based dataflow system implementation.

IceFlow’s objectives are:

- reducing complexity in Dataflow systems by removing connection-based overlays and corresponding orchestration requirements;

- enabling efficient communication by reducing data duplication; and

- enabling additional improvements through more direct communication and caching in the network.

IceFlow employs access to authenticated data in the network, as per CCNx/NDN-based ICN, for the communication between computation functions and provides additional features such as flow control, partitions for data streaming, and a window concept for synchronizing computations in streaming pipelines. The contributions of this paper are:

- an ICN naming scheme for Dataflow;

- a concept for receiver-driven flow control in IceFlow-based Dataflow systems and for dealing with parallel processing in IceFlow-based Dataflow systems; and

- a prototype implementation.
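How naming, partitions, and receiver-driven flow control could fit together can be sketched in a few lines of Python. The name layout `/<app>/<operator>/<partition>/<seq>`, the modulo assignment of partitions to parallel instances, and the fixed per-partition window are all assumptions made for this illustration; they are not the naming scheme or flow control algorithm defined in the IceFlow paper.

```python
def result_name(app, operator, partition, seq):
    """Build a hypothetical hierarchical name for one dataflow result."""
    return f"/{app}/{operator}/{partition}/{seq}"


def assigned_partitions(instance, num_instances, num_partitions):
    """Partitions consumed by one of several parallel downstream instances."""
    return [p for p in range(num_partitions) if p % num_instances == instance]


def next_interests(app, operator, partitions, next_seq, window):
    """Names a receiver may request now: at most `window` per partition."""
    return [
        result_name(app, operator, p, s)
        for p in partitions
        for s in range(next_seq, next_seq + window)
    ]


# Instance 1 of 2 parallel consumers, reading 4 upstream partitions.
parts = assigned_partitions(instance=1, num_instances=2, num_partitions=4)
names = next_interests("wordcount", "tokenize", parts, next_seq=0, window=2)
```

The point of the sketch is that re-scaling a computation step only changes which names each instance asks for; no connections between producers and consumers have to be torn down or re-orchestrated.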

Re-Thinking LoRaWAN

Low-power, long-range radio systems such as LoRaWAN represent one of the few remaining networked system domains that still feature a complete vertical stack with special link- and network layer designs independent of IP. Similar to local IoT systems for low-power networks (LoWPANs), the main service of these systems is to make data available at minimal energy consumption, but over longer distances. LoRaWAN (the system that comprises the LoRa PHY and MAC) supports bi-directional communication, if the IoT device has the energy budget. Application developers interface with the system using a centralized server that terminates the LoRaWAN protocol and makes data available on the Internet.

While LoRaWAN applications are typically providing access to named data, the existing LoRaWAN stack does not support this way of communicating. LoRaWAN is device-centric and is generally designed as a device-to-server messaging system – with centralized servers that serve as rendezvous point for accessing sensor data. The current design imposes rigid constraints and does not facilitate accessing named data natively, which results in many point solutions and dependencies on central server instances.

In our demo paper & presentation at ACM ICN-2020, we therefore describe how Information-Centric Networking could provide a more natural communication style for LoRa applications and how ICN could help to conceive LoRa networks in a more distributed fashion compared to today's mainstream LoRaWAN deployments. For LoWPANs (e.g., 802.15.4 networks), ICN has already been demonstrated to be an attractive and viable alternative to legacy integrated special purpose stacks – we believe that LoRa communication provides similar opportunities.

Watch Peter Kietzmann's talk about it here:

ACM ICN-2020 Highlights

ACM ICN-2020 took place online from September 29th to October 1st 2020. This is a quick summary of the main technical highlights from my personal perspective. Overall, it was a high-quality event, and it was great to see the progress that is being made by different teams. Here, I am focusing specifically on Architecture, Content Distribution, Programmability, and Performance. If you are interested in the complete program, all papers, presentation material, and presentation videos are available on the conference website.

Architecture

The Information-Centric Networking concept can be implemented in different ways (and some people would argue that some overlay systems for content distribution and data processing are essentially information-centric). ICN systems have often been associated with clean-slate approaches, requiring a difficult-to-imagine fork-lift replacement of larger parts of the infrastructure. While this has never been the case (because you can always run ICN protocols over different underlays or directly map the semantics to IPv6), it is still interesting to learn about new approaches and to compare existing data-oriented frameworks to pure ICN systems.

Named-Data Transport

In their paper Named-Data Transport: An End-to-End Approach for an Information-Centric IP Internet (Presentation) Abdulazaz Albalawi and J. J. Garcia-Luna-Aceves have developed an alternative implementation of the accessing named data concept called Named-Data Transport (NDT) that can leverage existing Internet routing and DNS, while still providing the general properties (accessing named-data securely, in-network caching, receiver-driven operation).

The system is based on three components: 1) A connection-free reliable transport protocol, called Named Data Transport Protocol (NDTP), 2) a DNS extension (my-DNS) for manifest records that describe content items and their chunks, and 3) NDT Proxies that act as transparent caches and that track pending requests, similar to ICN forwarders, but at the transport layer.

In NDT, content names are based on DNS domain names, and each name is mapped to an individual manifest record (in the DNS). These records provide a mapping to a list of IP addresses hosting content replicas. When requesting such records, the idea is that the system would be able to apply similar traffic steering as today's CDNs, i.e., provide the requestor with a list of topologically close locations. Producers would be responsible for producing and publishing such manifests.
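The role of the manifest can be illustrated with a short Python sketch. The `Manifest` fields and the `chunk_requests` helper are hypothetical stand-ins chosen for this example; they do not reflect the actual my-DNS record format or the NDTP message layout, and the addresses are documentation-range placeholders.

```python
from dataclasses import dataclass


@dataclass
class Manifest:
    """Hypothetical stand-in for an NDT manifest record."""
    name: str         # DNS-style content name
    num_chunks: int   # number of chunks that make up the content item
    replicas: list    # IP addresses hosting a copy of the content


def chunk_requests(manifest):
    """List the (replica, chunk index) pairs a consumer would request.

    Assumes the resolver already ordered replicas by proximity, so the
    first address is used for every chunk.
    """
    replica = manifest.replicas[0]
    return [(replica, i) for i in range(manifest.num_chunks)]


m = Manifest("video.example.com", 3, ["192.0.2.10", "198.51.100.7"])
requests = chunk_requests(m)
```

In this reading, the resolution step decides where to fetch from, while the per-chunk requests stay receiver-driven and can be intercepted by caches along the path.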

The Named Data Transport Protocol (NDTP) is a receiver-driven transport protocol (on top of UDP) used by consumers and NDT Proxies which behave logically like ICN forwarders. There is more to the whole approach (such as security, name privacy etc.).

In my view, NDT is an example of a resolution-based ICN system with interesting ideas for deployability. In principle, resolution-based ICN has been pursued by other approaches before (such as NetInf). In general, these systems have a better initial deployment story at the cost of requiring additional infrastructure (and resolution steps during operation.)

RESTful Information-Centric Web of Things

In the Internet of Things, ICN has demonstrated many benefits in terms of reduced code complexity, better data availability, and reduced communication overhead compared to many vertically integrated IoT stacks and location/connection-based protocols.

In their paper Toward a RESTful Information-Centric Web of Things: A Deeper Look at Data Orientation in CoAP (presentation), Cenk Gündoğan, Christian Amsüss, Thomas C. Schmidt, and Matthias Wählisch compare a CoAP and OSCORE (Object Security for Constrained RESTful Environments) based network of CoAP clients, servers, and proxies with a corresponding NDN setup.

The authors investigated the possibility of building a RESTful Web of Things that adheres to ICN first principles using the CoAP protocol suite (instead of a native ICN protocol framework). The results showed that, since CoAP is quite modular and can be used in different ways, this is indeed possible if one is willing to give up strict end-to-end semantics and to introduce proxies that mimic ICN forwarder behavior. (The paper reports on many other things, such as extensive performance measurements and comparisons.)

In my view, this is an interesting Gedankenexperiment, and there was a lively discussion at the conference. One of the discussion topics was the question of how accurate the comparison really is. For example, while it is possible to construct a CoAP proxy chain that mimics ICN behavior, real-world scenarios would require additional functionality in the CoAP network (routing, dealing with disruptions etc.) that might lead to a different level of complexity (that would possibly be less pronounced in a native ICN environment).

Still, the important take-away of this paper is that some applications of CoAP & OSCORE exhibit information-centric properties, and it is an interesting question whether, for a green-field deployment, the user would not be better served by a native ICN approach.

Content Distribution

Content Distribution and ICN have a long history, sometimes challenged by some misunderstandings. Because one of the early ICN approaches was called Content-Centric Networking (CCN), it was often assumed that ICN would disrupt or replace Content Distribution Networks (CDNs) or that it was a CDN-like technology.

While ICN will certainly help with large-scale content distribution and potentially also change/simplify CDN operations, the core idea is actually about accessing named data securely as a principal network service -- for all applications (that's why Named Data Networking -- NDN -- is a better name).

Managed content distribution as such will continue to be important, even in an ICN world. Surely, it will enjoy better support from the network than today's CDNs can expect, thus enabling exciting new applications and simplifying operations, but I prefer to avoid the notion of ICN replacing CDN.

When looking at actual networks and applications today, it is fair to say that almost nothing works without CDN. What we are seeing today is hyperscalers and essentially all the (so-called) OTT video providers extending their systems into ISP networks by simply shipping standalone edge caches, such as Netflix OCA servers, to ISPs.

Each of these providers has its own special requirements for how to map customers to edge caches, how to implement traffic steering, etc., which is painful enough for operators already. I expect this to become even more pressing as we shift more and more linear live TV to the Internet. Flash-crowd audiences such as viewers of UEFA Champions League matches will require a massive extension of the already extensive edge caching infrastructure, which means massive investments but also significant complexity with respect to traffic steering and guaranteeing a decent viewing experience.

In that context, it is no wonder that people try to resort to IP-Multicast for ensuring a more scalable last-mile distribution, such as this proposal by Akamai and others. Marrying IP-Multicast with a CDN overlay is (IMO) not exactly complexity reduction, so I think we are now at a tipping point where the Internet, in terms of concepts and deployable physical infrastructure, can provide many cool services, but where the limited features of the network layers require a prohibitive amount of complexity -- to an extent where people start looking for better solutions.

At ICN-2020, CDN was thus discussed quite extensively again -- with many interesting, complementary contributions.

Keynote by Bruce Maggs on The Economics of Content Distribution

We were extremely happy to have Bruce Maggs (Emerald Innovations, on leave from Duke University, ex NEC researcher, one of the founding employees of Akamai) delivering his keynote on the Economics of Content Delivery. In his talk Bruce explained different economic aspects (flow of payments, cost of goods sold) but also challenges for different CDN services such as live-streaming.

The take-aways for ICN were:

- Incentives and cost must be aligned

- Performance benefits from caching

- Reducing latency is valuable to content providers

- Reducing network traffic is valuable to ISPs.

- If there was caching at the core (in addition to the edge)

- What is the additional benefit?

- Who pays for that?

- Protocol innovation is still possible

- In the past, people thought that HTTP/TLS/TCP/IP is difficult to overcome

- QUIC demonstrates that new protocols can be introduced

The socio-economic discussion resonated quite well with me, as some of the earlier ICN projects in Europe tried to address these aspects relatively early, in 2008. I believe this was due to the operator and vendor influence at the time. In retrospect, I would say that the approaches at that time were possibly too top-down and premature (trying to revert value chains and find new business models). It is only now that we understand the economics of CDN, its complexity and real cost that (in my view) represent barriers to innovation -- and that we can start to imagine actually implementing different systems.

Far Cry: Will CDNs Hear NDN's Call?

In their paper Far Cry: Will CDNs Hear NDN's Call? (presentation), Chavoosh Ghasemi, Hamed Yousefi, and Beichuan Zhang compare NDN with enterprise CDN (a particular variant of CDN) with respect to caching and retrieval of static content.

In their work, the authors deployed an adaptive video streaming service over three different networks: Akamai, Fastly, and the NDN testbed. They had users on four different continents and conducted a two-week experiment, comparing Quality of Experience, origin workload, failure resiliency, and content security.

I cannot summarize all of the results here, but the conclusions by the authors were:

- CDNs outperform the current NDN testbed deployment in terms of QoE (achievable video resolution in a DASH-setting)

- Origin workload and failure resiliency are mainly the products of the network design -- and the NDN testbed outperforms current CDNs

- More as an interpretation: NDN can realize a resilient, secure, and scalable content network given appropriate software and protocol maturity and hardware resources.

The paper was discussed intensively at the conference. For example, it was debated how comparable the plain NDN testbed and its network service really are to a production-level CDN.

In my view, the value of this paper lies in the created experiment facilities and the attempt to establish some ground truth (based on current NDN maturity). I hope that this work can be leveraged by more experiments in the future.

iCDN: An NDN-based CDN

In their paper iCDN: An NDN-based CDN (presentation), Chavoosh Ghasemi, Hamed Yousefi, and Beichuan Zhang (i.e., the same authors), pursue a more forward-looking approach. In this paper, they develop a CDN service based on ICN mechanisms, i.e., trying to conceive a future CDN system that does not need to take the current network's limitations into account.

One of the interesting ICN properties is that the main service of accessing named data does not require any notion of location. Sometimes people assume that an Information-Centric system always needs to map names to locators such as IP addresses, but this is a really limited view. Instead, it is possible to build the network solely on forwarding INTERESTs for named data based on forwarding information of that same namespace. A forwarder may have more than one forwarding information base entry for the same name -- from a consumer (application) perspective these are completely equivalent.

Because of intrinsic object security, it does not matter from which particular host a content object is served. There can be several copies -- all equivalent. When creating copies of original content, e.g., by cloning a data repository, the new copy needs to be announced (by injecting routing information), and from that point on, it is reachable without any additional management, configuration or other out-of-band mechanisms.

When applying this notion to CDN scenarios, it is easy to understand the simplification opportunities. In ICN, content repositories can be added to the network, and in-network name-based forwarding will find the closest copy automatically.
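The following Python sketch illustrates this with a toy forwarding information base: names are matched by longest prefix, a prefix can have several next hops, and the cheapest one wins. It is only a sketch under my own assumptions, not how NDN forwarders or iCDN are implemented: the `Fib` class, the face names, the example namespace, and the single numeric cost per face are all hypothetical.

```python
from dataclasses import dataclass, field


def split_name(name: str) -> tuple:
    """Split a hierarchical name such as /video/example/seg1 into components."""
    return tuple(component for component in name.split("/") if component)


@dataclass
class Fib:
    """Toy forwarding information base: name prefix -> {face: cost}."""

    entries: dict = field(default_factory=dict)

    def announce(self, prefix: str, face: str, cost: int) -> None:
        """Record that content under `prefix` is reachable via `face`."""
        self.entries.setdefault(split_name(prefix), {})[face] = cost

    def next_hop(self, name: str):
        """Longest-prefix match on name components, then pick the cheapest face."""
        components = split_name(name)
        for length in range(len(components), -1, -1):
            faces = self.entries.get(components[:length])
            if faces:
                return min(faces, key=faces.get)
        return None  # no matching prefix: the INTEREST cannot be forwarded


fib = Fib()
fib.announce("/video/example", "face-to-origin", cost=10)
before = fib.next_hop("/video/example/seg1")

# A cloned repository announces the same prefix; nothing else is reconfigured.
fib.announce("/video/example", "face-to-replica", cost=2)
after = fib.next_hop("/video/example/seg1")
print(before, after)
```

The consumer asks for the same name before and after the replica appears. Only the forwarding state changes, which is the simplification opportunity for CDN scenarios.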

For iCDN, the authors have leveraged this basic notion and built an ICN-based CDN that does not need any client-to-cache mapping and overlay routing mechanisms. Based on that, iCDN features logical partitions and cache hierarchies for content namespaces (for acknowledging that there may be different CDN providers, hosting different content services).

iCDNs employ cache hierarchies to exploit on-path and off-path caches without relying on application-layer routing functions. The idea was to provide a scalable, adaptive solution that can cope with dynamic network changes as well as dynamic changes in content popularity.

There are more details to this approach, and of course the debate on what is the best ICN-based CDN design has just started. Still, this paper is an interesting contribution in my view, because it illustrates the opportunities for rethinking CDN nicely.

Programmability

Programmability and ICN has two facets: 1) Implementing distributed computing with ICN (for example as in CFN -- Compute-First Networking) and 2) implementing ICN with programmable infrastructure. ACM ICN-2020 has seen contributions in both directions.

Result Provenance in Named Function Networking

In their paper Result Provenance in Named Function Networking (presentation), Claudio Marxer and Christian Tschudin have leveraged their previous work on Named Function Networking (NFN) and developed a result provenance framework for distributed computing in NFN.

In this work, the authors augmented NFN with a data structure that creates transparency about the genesis of every evaluation result so that entities in the system can ascertain result provenance. The main idea is the introduction of so-called provenance records that capture metadata about the genesis of the computation result. The paper discusses integration of these records into NDN and procedures for provenance checks and trust computation.
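As a rough illustration of the general idea (not the record format or the trust computation from the paper), a provenance record could capture which function produced a result from which inputs, and a check could walk these records recursively. In the Python sketch below, all field names, the example names under `/data`, `/fn`, and `/result`, and the simple "executor must be in a trusted set" rule are my own assumptions.

```python
import hashlib
from dataclasses import dataclass


def digest(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


@dataclass(frozen=True)
class ProvenanceRecord:
    """Hypothetical metadata about how a named result came into existence."""

    result_name: str
    function_name: str
    input_names: tuple
    result_digest: str
    executed_by: str


def provenance_ok(name, store, records, trusted) -> bool:
    """Check a result and, recursively, every input it was computed from."""
    data = store.get(name)
    if data is None:
        return False  # nothing to verify
    record = records.get(name)
    if record is None:
        return True  # leaf: original data that was not computed
    if record.executed_by not in trusted:
        return False
    if digest(data) != record.result_digest:
        return False  # stored result does not match what the record describes
    return all(provenance_ok(i, store, records, trusted) for i in record.input_names)


store = {"/data/a": b"1", "/data/b": b"2", "/result/sum": b"3"}
records = {
    "/result/sum": ProvenanceRecord(
        result_name="/result/sum",
        function_name="/fn/add",
        input_names=("/data/a", "/data/b"),
        result_digest=digest(b"3"),
        executed_by="node-1",
    )
}
print(provenance_ok("/result/sum", store, records, trusted={"node-1"}))
```

A real system would also have to secure the records themselves and the original input data, which this sketch simply takes as given.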

In my view, the interesting contribution of this work is the illustration of how the general concept of provenance verification can be implemented in a data-oriented system such as the ICN-based Named Function Networking framework. The results may be applicable (to some extent) to other ICN-based in-network computing systems, so I hope this paper will start a thread of activities on this subject.

ENDN: An Enhanced NDN Architecture with a P4-programmable Data Plane

In their paper ENDN: An Enhanced NDN Architecture with a P4-programmable Data Plane (presentation), Ouassim Karrakchou, Nancy Samaan, and Ahmed Karmouch present an NDN system that is implemented in a P4-programmable data plane, i.e., a system in which applications can interact with a control plane that configures the data plane according to the required services.

The work in this paper is based on the notion that applications specify their content delivery requirements to the network, i.e., the control plane of a network. The control plane provides a catalogue of content delivery services, which are then translated into data plane configurations that ultimately get installed on P4 switches.

Examples of such services include Content Delivery Pattern services (whether the system is based on INTEREST/DATA or some stateful data forwarding), Content Name Rewrite services (enabling the network to rewrite certain names in INTERESTs), Adaptive Forwarding services (next-hop selection) etc.

In my view, this paper is interesting because it provides a relatively advanced perspective of how applications specify required behavior to a programmable ICN network. Moreover, the authors implemented this successfully on P4 switches and described relevant lessons learned and achievements in the paper.

Performance

Performance has historically always been an interesting topic in ICN. On the one hand, ICN provides substantial performance increases in the network due to its forwarding and caching features. On the other hand, it has been shown that implementing an ICN forwarder that operates at modern network line-speeds is challenging.

NDN-DPDK: NDN Forwarding at 100 Gbps on Commodity Hardware

In their paper NDN-DPDK: NDN Forwarding at 100 Gbps on Commodity Hardware (presentation), Junxiao Shi, Davide Pesavento, and Lotfi Benmohamed present their design of a DPDK-based forwarder.

The authors have developed a complete NDN implementation that runs on real hardware and that supports the complete NDN protocol and name matching semantics.

This work is interesting because the authors describe the different optimization techniques, including better algorithms and more efficient data structures, as well as making use of the parallelism offered by modern multi-core CPUs and multiple hardware queues with user-space drivers for kernel bypass.

This work represents the first software forwarder implementation that is able to achieve 100 Gbps without compromises in NDN protocol semantics. The authors have published the source at https://github.com/usnistgov/ndn-dpdk.

ACM ICN-2019 Highlights

ACM ICN-2019 took place in the week of September 23 in Macau, SAR China. The conference was co-located with Information-Centric-Networking-related side events: the TouchNDN Workshop on Creating Distributed Media Experiences with TouchDesigner and NDN before and an IRTF ICNRG meeting after the conference. In the following, I am providing a summary of some highlights of the whole week from my (naturally very subjective) perspective.

Applications

ICN, with its paradigm of accessing named data in the network, is supposed to provide a different, hopefully better, service to applications compared to the traditional stack of TCP/IP, DNS, and application-layer protocols. Research in this space often addresses one of two interesting research questions: 1) What is the potential for building or re-factoring applications that use ICN, and what is the impact on existing designs; and 2) what requirements can be learned for the evolution of ICN, what services are useful on top of an ICN network layer, and/or how should the ICN network layer be improved.

Network Management